The fight against scam ads—by the numbers

This is the second in a series of posts that will provide greater transparency about how we make our ads safer by detecting and removing scam ads. -Ed.

Last month, I shared an overview of the technology Google has built to prevent bad ads from showing on Google and our partner sites, including our efforts to review accounts, sites and ads. Today I’d like to provide some metrics that give greater insight into the scale of the problem we’re combating.

Bad ads have a disproportionately negative effect on our users; even a single bad ad slipping through our defenses is one too many. That’s why we’re constantly working to improve our systems and use new techniques to prevent bad ads from appearing on Google and our partner sites. The scale is large: billions of ads are submitted every year for a wide variety of products, and our ads policies cover a huge array of areas in more than 40 different languages. For example, because we aim to show safe, truthful and accurate ads to our users, we don’t allow ads that make misleading claims, ad spam or malware.

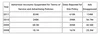

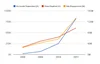

Ads that violate our ads policies aren’t allowed to be shown on Google and our AdSense partner sites. For many repeat offenders, we ban not just the ads but also the advertisers who seek to abuse our advertising system to take advantage of people. In the case of ads that promote counterfeit goods, we typically ban the advertiser after only one violation. These metrics give some insight into the scale of the impact we have had over time, showing the numbers of actions we’ve taken against advertiser accounts, sites and ads. You can see that the numbers are growing, and growing faster over time.

We find that a relatively small number of malicious players make repeated attempts to bypass our defenses and defraud users. As we get better and faster at catching these advertisers, they redouble their efforts and create more accounts at an even faster rate.

Even in this ever-escalating arms race, our efforts are working. One method we use to test the success of our efforts is to ask human raters to tell us how we’re doing. These human raters review a set of sites that are advertised on Google. We use a large set of sites to get an accurate statistical reading of our efforts. We also weight the sites in our statistical sample by the number of times each site was displayed, so that a site that is shown more often is more likely to be in our sample set. By using human raters, we can calibrate our automated systems and ensure that we’re improving our efforts over time. In 2011, we reduced the percentage of bad ads by more than 50 percent compared with 2010. That means the proportion of bad ads showing on Google was more than halved in just a year.
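To make the sampling idea concrete, here is a minimal Python sketch of impression-weighted sampling and of the year-over-year comparison. It is an illustration under stated assumptions, not a description of Google’s actual systems: the function names, site names, impression counts and rater verdicts are all hypothetical, and sampling with replacement is just one simple way to make frequently shown sites more likely to appear in the sample.

```python
import random


def sample_sites_by_impressions(impressions, k, seed=None):
    """Draw k sites (with replacement), each chosen with probability
    proportional to how many times it was displayed.

    `impressions` maps a site name to its display count (hypothetical data).
    """
    rng = random.Random(seed)
    sites = list(impressions)
    weights = [impressions[site] for site in sites]
    return rng.choices(sites, weights=weights, k=k)


def estimated_bad_rate(sample, rater_says_bad):
    """Fraction of sampled sites that human raters flagged as bad."""
    if not sample:
        raise ValueError("sample must not be empty")
    flagged = sum(1 for site in sample if rater_says_bad[site])
    return flagged / len(sample)


def relative_reduction(previous_rate, current_rate):
    """Relative drop from one period's bad-ad rate to the next."""
    if previous_rate <= 0:
        raise ValueError("previous_rate must be positive")
    return (previous_rate - current_rate) / previous_rate


# Illustrative values only; none of these numbers come from real measurements.
impressions = {"site-a.example": 9000, "site-b.example": 900, "site-c.example": 100}
rater_says_bad = {"site-a.example": False, "site-b.example": True, "site-c.example": False}

sample = sample_sites_by_impressions(impressions, k=1000, seed=42)
print("estimated bad rate:", estimated_bad_rate(sample, rater_says_bad))
```

Because sites are drawn in proportion to how often they were displayed, the estimated rate reflects what users actually saw rather than a simple count of distinct sites. A reduction of more than 50 percent corresponds to `relative_reduction(previous_rate, current_rate) > 0.5`.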

Google’s long-term success is based on people trusting our products. We want to make sure that the ads on Google are safe and trustworthy, and we won’t be satisfied until they are.