30 years of family videos in an AI archive

My dad got his first video camera the day I was born nearly three decades ago. “Say hello to the camera!” are the first words he caught on tape, as he pointed it at a red, puffy baby (me) in a hospital bassinet. The clips got more embarrassing from there, as he continued to film through many diaper changes, temper tantrums and—worst of all—puberty.

Most of those potential blackmail tokens sat trapped on miniDV tapes or scattered across SD cards until two years ago when my dad uploaded them all to Google Drive. Theoretically, since they were now stored in the cloud, my family and I could watch them whenever we wanted. But with more than 456 hours of footage, watching it all would have been a herculean effort. You can only watch old family friends open Christmas gifts so many times. So, as an Applied AI Engineer, I got down to business and built an AI-powered searchable archive of our family videos.

If you’ve ever used Google Photos, you’ve seen the power of using AI to search and organize images and videos. The app uses machine learning to identify people and pets, as well as objects and text in images. So, if I search “pool” in the Google Photos app, it’ll show me all the pictures and videos I ever took of pools.

But for this project, I needed a couple of features Photos doesn’t (yet!) support. First, because my dad’s first camera recorded footage to miniDV tapes, those videos were uploaded as meaty, two-hour-long movies with no useful metadata. Instead, my dad would start a clip by saying, “let me put a date on the screen here...” and a little white text snippet would appear in the bottom right corner of the frame. In between shots on a single reel, he’d say: “Say goodbye, I’m going to fade out now.” I would scream, “NO, DON’T FADE OUT,” while the screen faded to black. So, my first step was to use machine learning to automatically parse the date shown on the screen, and split the single long video into shorter clips after each fade out.
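As a rough illustration of the date-parsing half (not the code behind the archive), here is a minimal Python sketch. It assumes the on-screen text has already been read off a frame as a plain string, and that the camera's overlay uses one of a few guessed formats. The names `parse_overlay_date` and `CANDIDATE_FORMATS` are hypothetical, and the real overlay format may well differ.

```python
from datetime import datetime

# Guessed overlay formats (assumption): e.g. "JAN 5 1995", "12/25/1998", "1998-12-25".
CANDIDATE_FORMATS = ("%b %d %Y", "%m/%d/%Y", "%Y-%m-%d")


def parse_overlay_date(ocr_text):
    """Return a date parsed from on-screen text, or None if no format fits."""
    # Drop periods and commas, then collapse whitespace: "JAN. 5, 1995" -> "JAN 5 1995".
    cleaned = " ".join(ocr_text.replace(".", " ").replace(",", " ").split())
    for fmt in CANDIDATE_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date()
        except ValueError:
            continue  # Try the next guessed format.
    return None
```

Returning `None` rather than raising keeps a batch job moving when a frame's text is unreadable; those clips can simply be left undated.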

In this picture, you can see the timestamp shown on screen. Using the Vision API, I could extract it to sort my videos by date.

For this, I turned to the Video Intelligence API, a Google Cloud tool that lets developers analyze videos with machine learning. It allows you to replicate many of the features found in the Google Photos app, like tagging objects in images and recognizing on-screen text, and a whole lot more. For example, the API’s shot change detection feature automatically finds the timestamps in videos where a scene changes. This allowed me to split those long videos into smaller chunks.
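To make the splitting step concrete, here is a small Python sketch of turning shot boundaries into clips. It assumes the shot start and end offsets have already been pulled out of the API response as `(start, end)` pairs in seconds. The `Clip` class, the `shots_to_clips` helper, and the two-second minimum are hypothetical choices for illustration, not part of the API.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    start: float  # Seconds from the start of the source video.
    end: float


def shots_to_clips(shots, min_length=2.0):
    """Turn (start, end) shot offsets into clips.

    Shots shorter than min_length are folded into the previous clip, so a
    brief flicker does not become its own tiny video.
    """
    clips = []
    for start, end in sorted(shots):
        if clips and (end - start) < min_length:
            clips[-1].end = max(clips[-1].end, end)
        else:
            clips.append(Clip(start, end))
    return clips
```

For example, `shots_to_clips([(0.0, 61.5), (61.5, 62.0), (62.0, 300.0)])` folds the half-second middle shot into the first clip and returns two clips. Each clip's offsets could then be handed to a video tool to cut the actual file.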

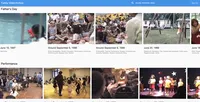

Using the label detection feature, I could search for all sorts of different events, like “bridal shower,” “wedding,” “bat and ball games” and “baby.” By searching “performance,” I was able to finally find one of my life’s proudest accomplishments on tape—a starring role singing “It’s Not Easy Being Green” in my kindergarten’s production of the Sesame Street musical.

My starring role as Kermit the Frog in my school’s Sesame Street musical. The Video Intelligence API tagged it as “performance”.
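Searching by label boils down to a lookup from label to clips. The sketch below assumes each clip's labels have already been extracted as `(description, confidence)` pairs. The clip ids, the `build_label_index` name, and the 0.5 confidence cutoff are all hypothetical.

```python
from collections import defaultdict


def build_label_index(clip_labels, min_confidence=0.5):
    """Map each lowercase label to clip ids, highest confidence first."""
    hits_by_label = defaultdict(list)
    for clip_id, labels in clip_labels.items():
        for description, confidence in labels:
            if confidence >= min_confidence:
                hits_by_label[description.lower()].append((confidence, clip_id))
    # Sort each label's hits so the most confident match comes first.
    return {
        label: [clip_id for _, clip_id in sorted(hits, reverse=True)]
        for label, hits in hits_by_label.items()
    }


# Made-up clip ids and scores, purely for illustration.
index = build_label_index({
    "tape03_clip12": [("performance", 0.91), ("stage", 0.40)],
    "tape07_clip02": [("Performance", 0.65), ("baby", 0.88)],
})
matches = index.get("performance", [])
```

Dropping low-confidence labels keeps the search results tidy, at the cost of occasionally hiding a correct but uncertain tag.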

The Video Intelligence API’s real “killer feature” for me was its ability to do audio transcription. By transcribing my videos, I was able to query clips by what people said in them. I could search for specific names (“Scott,” “Dale,” “grandma”), proper nouns (“Chuck E Cheese,” “Pokemon”), and unique phrases. By searching “first steps,” I found a clip of my dad saying, “Here she comes… plunk. That’s the first time she’s taken major steps” alongside a video of me managing, just barely, to waddle along.

My first steps that I was able to find with the Video Intelligence API’s Transcription feature. Here, my dad says, “...this is the first time she’s taken major steps.”
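Once every clip has a transcript, a basic search only needs to check that each word of the query appears somewhere in the text. That is why “first steps” can match “the first time she’s taken major steps” even though the two words are not adjacent. Here is a minimal sketch, assuming transcripts are stored as plain strings keyed by hypothetical clip ids; `tokenize` and `search_transcripts` are illustrative names, not API calls.

```python
import re


def tokenize(text):
    """Lowercase the text and split it into words, dropping punctuation."""
    return re.findall(r"[a-z0-9']+", text.lower())


def search_transcripts(transcripts, query):
    """Return ids of clips whose transcript contains every word in the query."""
    wanted = set(tokenize(query))
    if not wanted:
        return []
    return [
        clip_id
        for clip_id, text in transcripts.items()
        if wanted <= set(tokenize(text))
    ]


transcripts = {
    "clip_a": "Here she comes... plunk. That's the first time she's taken major steps",
    "clip_b": "Say goodbye, I'm going to fade out now.",
}
found = search_transcripts(transcripts, "first steps")
```

This matches whole words only, so a fuller version would want stemming or fuzzy matching to cope with transcription errors.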

In the end, machine learning helped me build exactly the kind of archive I wanted—one that let me search my family videos by memories, not timestamps.

P.S. Want to see how I built it? Check out my technical blog post or catch the video on the Cloud YouTube channel.